Caroline Chan, Shiry Ginosar, Tinghui Zhou and Alexei A. Efros of UC Berkeley deliver with «Everybody Dance Now» not just an interesting scientific paper but also hope for all movement dyslexics whose dance style is limited to foot tapping and head nodding. In their experimental setup, artificial intelligence reproduces the movement sequences of a source dancer as precisely as possible on video of another person. You only need to be filmed for a few minutes performing some standard moves, and the computer does the rest. Of course the results still look rough and not quite polished, but they are pretty impressive.

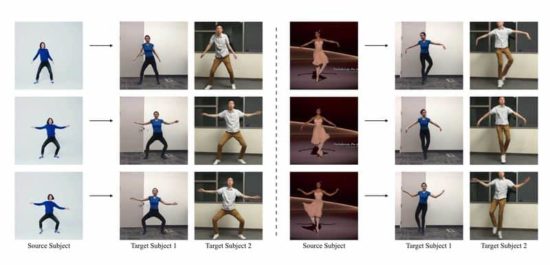

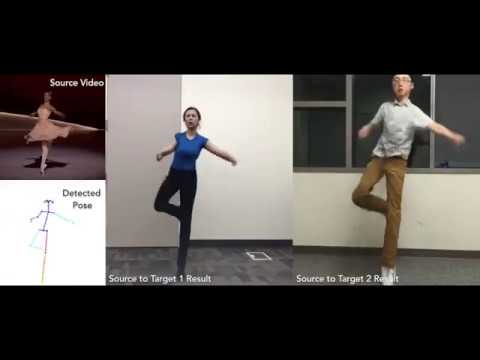

This paper presents a simple method for 'do as I do' motion transfer: given a source video of a person dancing we can transfer that performance to a novel (amateur) target after only a few minutes of the target subject performing standard moves. We pose this problem as a per-frame image-to-image translation with spatio-temporal smoothing. Using pose detections as an intermediate representation between source and target, we learn a mapping from pose images to a target subject's appearance. We adapt this setup for temporally coherent video generation including realistic face synthesis.
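For the curious, the per-frame idea from the abstract can be sketched in a few lines of Python. This is only a rough illustration and not the authors' code: `detect_pose` and `generate_target` are hypothetical placeholders for a pose detector and a trained pose-to-image generator, and the moving average used here is a simple stand-in for the paper's spatio-temporal smoothing, which is part of the learned model.

```python
from typing import Callable, List, Sequence, Tuple

Point = Tuple[float, float]
Pose = List[Point]  # one (x, y) coordinate per joint


def smooth_poses(poses: Sequence[Pose], window: int = 3) -> List[Pose]:
    """Average each joint over neighbouring frames (illustrative stand-in)."""
    half = window // 2
    smoothed: List[Pose] = []
    for t in range(len(poses)):
        # Clamp the window at the start and end of the sequence.
        lo, hi = max(0, t - half), min(len(poses), t + half + 1)
        neighbours = poses[lo:hi]
        pose: Pose = []
        for j in range(len(poses[t])):
            x = sum(p[j][0] for p in neighbours) / len(neighbours)
            y = sum(p[j][1] for p in neighbours) / len(neighbours)
            pose.append((x, y))
        smoothed.append(pose)
    return smoothed


def transfer_motion(
    source_frames: Sequence[object],
    detect_pose: Callable[[object], Pose],      # hypothetical pose detector
    generate_target: Callable[[Pose], object],  # hypothetical trained generator
    window: int = 3,
) -> List[object]:
    """Source frame -> pose -> smoothed pose -> image of the target person."""
    poses = [detect_pose(frame) for frame in source_frames]
    return [generate_target(pose) for pose in smooth_poses(poses, window)]
```

The point of the pose as a middle step is that the generator never sees the source dancer at all, only a stick figure, so it can be trained on footage of the target person alone.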

"Dravens Tales from the Crypt" has been enchanting for over 15 years with a tasteless mixture of humor, serious journalism - for current events and unbalanced reporting in the press politics - and zombies, garnished with lots of art, entertainment and punk rock. Draven has turned his hobby into a popular brand that cannot be classified.